Background

Welcome to new project details on Forensic sketch to image generator using GAN. Image processing has been a crucial tool for refining the image or we can say, to enhance the image. With the development of machine learning tools, the image processing task has been simplified to great extent. Automatic face sketch-photo generation /synthesis and identification has been always an important topic in computer vision, image processing and machine learning. As our project falls under the same domain, it takes help of classes of machine learning algorithm/system for the transformation of person’s sketch into the photograph which has

the characteristic or feature associated with the sketch. This way, the realistic photograph for any forensic sketch can be obtained easily with precise detail and in less time. The entire process is automated so there is not much human effort while using the system. Narrowing down the details of sketch to photo generation into few lines, it includes following models:

• A Generator that takes forensic sketch as its input and provides realistic photograph of the sketch as output.

• A Discriminator that is used to train the generator or to simply put, to increase the accuracy of the photograph being generated by the generator.

These two components in the system works together and against each other to generate the precise photograph of the forensic sketch fed into the system. The core idea behind the adversarial network is to pit two classes of neural networks against each other. Generative adversarial networks (GANs) are based on a game theoretic scenario in which the generator network must compete against an adversary. The generator network directly produces samples. Its adversary, the discriminator network, attempts to distinguish between samples drawn from the training data and samples drawn from the generator thus making it discriminator(classifier) as its name suggests. We can consider the following analogy:

We can think of the generator as being like a counterfeiter, trying to make fake money, and the discriminator as being like police, trying to allow legitimate money and catch counterfeit money. To succeed in this game, the counterfeiter must learn to make money that is indistinguishable from genuine money, and the generator network must learn to create samples that are drawn from the same distribution as the training data.

The two models, the generator and discriminator, are trained together. The generator generates a batch of samples (in our case photographs) and these, along with real examples (photographs) from the domain, are provided to the discriminator and classified as real or fake. The discriminator is then updated to get better at discriminating real and fake samples in the next round, and importantly, the generator is updated based on how well, or not, the generated samples fooled the discriminator. CGAN (Conditional Generative Adversarial Network) that our system employs is simply the extension to the GAN is in their use for conditionally generating an output. In our system, for generating photo from forensic sketch, the discriminator is provided examples of real and generated photos of forensic sketches and the generator is provided with a random vector from the latent space (or noise) as well as (conditioned on) forensic sketches as input.

The system works with image data so it uses Convolutional Neural Networks, or CNNs (neural nets specialized in image generation and classification), as the generator and discriminator models. Thus, Conditional Generative Adversarial Network (CGAN) conditioned on facial attributes/sketches is the backbone of the project or we can designate as a robot artist specialized in generating photo realistic images from the sketch.

Problem Statement

The sketch of a person is always inferior to a photograph that provides a detailed information of the facial attribute of a person including its skin tone, color of hair and other characteristic that remains missing in a sketch. There is hardly a case where the person can’t be recognized with a photograph but is recognized with his/her sketch. The fact is other way around. So sketch can be crucial element in law enforcement. The system existing in present takes the use of Photoshop that usually requires a Photoshop professional to fill in the sketch with textures and color.

This is a cumbersome task and can take more than an hour to generate a decent photograph from the sketch. Time is critical and there is no single criminal such that the task will be completed after synthesizing one photograph. The law enforcers has to deal with hundreds of criminal sketches on daily or weekly basis. In law enforcement, in most cases, the photo of a suspect is not available in the police database and therefore, the forensic sketch, which is drawn by a police artist based on an eyewitness testimony, is the only clue to identify the suspect.

However, recognition of the suspect using a face sketch is much harder than a face photo because of the significant differences between the two modalities, such as the texture and geometric mismatching, which reduces the chance of identifying the suspect by person or from a mugshot database. In these cases, automatic face sketch-photo synthesis comes handy. The existing methods are still hazy and mostly suffers from major drawbacks. So here is where our system fills the void. Needless to say it is also cost effective without any compromise in the output.

Purpose/Scope

This is proposal for the design and development of software named as “Forensic sketch to image generator using GAN” as a team work for minor project. Forensic sketch to photo generation can be a critical application in law enforcement and digital entertainment industry. So we can foresee this tiresome task of generating photograph from image using traditional techniques can be dominated with this technique that may not only reduce the time of synthesis of photograph but also reduce the human effort for a greater good.

Objectives:

To enforce facial attributes, such as skin and hair color, from the forensic sketch using deep neural net architecture, Conditional version of Generative Adversarial Network (CGAN).

System Overview

Our system is based on Conditional GAN(CGAN) as it is worth revisiting one of the critical reasons that cause GAN training and design difficulties: the freedom from simple constraints. It is indeed possible to solve some of the problems with GAN by adding constraints. Moreover, additional restrictions have extended its application. So we use conditional generative adversarial nets (conditional GAN or CGAN) proposed by Mriza in which by adding category constraints to GAN impelled the training process to become more stable and the final result more multiform [2]. In the existing work the loss setup is more close to original GAN which equates to minimizing the Pearson X² divergence. The reason for this has to do with the fact that a log loss will basically only care about whether or not a sample is labeled correctly or not. It will not heavily penalize based on the distance of said sample from correct classification. If a label is correct, it doesn’t worry about it further. In contrast, L2 loss does care about distance [6].

Previous approaches have found it beneficial to mix the GAN objective with a more traditional loss, such as L2 distance. The discriminator’s job remains unchanged, but the generator is tasked to not only fool the discriminator but also to be near the ground truth output in an L2 sense. But here we have explored using L1 distance rather than L2 as L1 encourages less blurring

Fully Convolutional GAN Discriminator

The discriminator used in this model is another unique component to this design. The fully convolutional discriminator works by classifying individual (N x N) patches in the image as “real vs. fake”, opposed to classifying the entire image as “real vs. fake”. The structure looks a lot like the encoder section of the generator, but works a little differently. The output is a 30×30 image where each pixel value (0 to 1) represents how believable the corresponding section of the unknown image is. In our implementation, each pixel from this 30×30 image corresponds to the believability of an overlapping small patches of the input image.

Training of Model

To train this network, there are two steps: training the discriminator and training the generator.

1. Training of the Discriminator :

The process of training the discriminator consists of minimizing sum of two types of losses. The first loss is the fake loss, which is the binary cross entropy loss between the result of the discriminator having sketch from dataset and photo generated by the generator using corresponding sketch from the dataset as input labeled false. And the second loss is similarly real loss, which is again the binary cross entropy loss between the results of the discriminator but in this case the input of the discriminators are both the paired dataset of sketch and photo labeled true. Simply these both type of losses are considered for the discriminator to be able to distinguish between a acceptable generated photo and not acceptable generated photo. The sum of these losses are back propagated and optimized using Adam optimizer.

2. Training of the Generator

The main aim of generator is to create an image which can fool the discriminator. So the loss here is the binary cross entropy loss between the result of the discriminator having sketch from dataset and photo generated by the generator using corresponding sketch from the dataset as input labeled true. Along with the binary cross entropy loss the L1 loss is also considered between the real photo from the dataset and the generated photo from the generator. The sum of these losses are back propagated and optimized using Adam optimizer.

Application of Trained Model

The discriminator part of this architecture is only used for the training purpose of the generator. Once the training process is done the discriminator serves no purpose. Just the generator part of the GAN is used for generation of the resultant images. The desired images are generated as per the condition or input in the generator model.

Result and Analysis

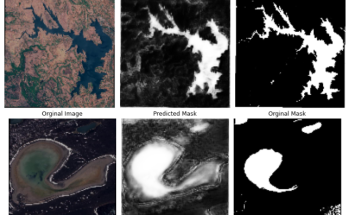

We designed and implemented a system which generates photograph from corresponding sketch. Inorder to implement our model we collected dataset from CUHK Face Sketch Database which contain 400 photo-sketch paired images, which was then divided into train and test sets in 70%-20% respectively. The output of the trained model wrapped onto a simple UI form where user can upload sketch file and the respective converted photo will be displayed alongside as follows:

Analysis

After training our model with the dataset for 700 epochs with batch size as 1 we minmized the generator loss to 0.86 and discminator loss to 0.62. The overall performance of the system was found almost seamless when the sketches of the ethnic group we trained our model with were provided as input. However, when the datasets with different ethnicity were provided to the system, the sytem couldn’t properly map the sketch to photograph that we expect it to be.

The most common ways to evaluate the GAN network is to either show the visual output as in the original GAN paper or by showing on how well they do for semi-supervised learning. The visual output of our system is fine-tuned and is distinguishable as a person’s image which properly maps the facial attribute without compromising even the little information provided by its respective sketch. Thus, our system can best be analyzed only by looking at the output details that one hopes to find in a photograph of a person.

Conclusion

Generative Adversarial Network (GANs), since its introduction, has been a very popular model in image to image translation, the same domain where our project’s system falls in. Different versions of this Adversarial Network have been implemented in different fields where our model used Conditional Generative Adversarial Network (CGAN) in particular in order to generate photorealistic image of the respective forensic sketch.

Datasets were collected from various sources enlisted in reference. They were used to train the network such that the network cleverly framed the image generation task as a supervised learning (with two sub-models) problem rather than unsupervised learning which in fact isn’t generative model’s nature. Thus, the model was finally capable of learning the regularities in data set while still being tightly constrained to the conditioned input. The project has successfully satiated its whole total objective of imparitng complete facial attribute of a person into the photograph, including hair, skin color etc from the sketch without compromising facial orientation and structure constraint of corresponding sketch.

Our project eradicates the traditional approach of employing Photoshop tools to convert sketch into photo realistic image that removes the difficulty faced during the recognition of suspect when only sketch was provided for the same task. The time it requires to do the same using traditional methods is also high along with the cost associated, which questions the overall efficiency of the system being used. This problem has been addressed properly by our system and taking time elapsed along with cost as one of the top priority, our system can generate the photorealistic image of corresponding sketch in a matter of seconds with simple UI that eliminates any need of professionals to operate the system.

Bibliography and References:

- 1. Ian J. Goodfellow, Jean Pouget-Abadie∗, Mehdi Mirza, Bing Xu, David WardeFarley,Skherjil Ozair†, Aaron Courville, Yoshua Bengio, Generative Adversarial Nets , Department d’informatique et de recherche op ´ erationnelle ´Universite de Montr ´ eal ´,Montreal (https://arxiv.org/pdf/1406.2661.pdf) Accessed on: 17 May 2019 2:00 PM

- Mehdi Mirza Conditional Generative Adversarial Nets, Departement d’informatique et de recherche op ´ erationnelle ´Universite de Montr ´ eal ´, Montreal (https://arxiv.org/pdf/1411.1784.pdf) Accessed on: 17 May 2019 2:00 PM

- Jun-Yan Zhu∗ Taesung Park∗ Phillip Isola Alexei A. Efros, Unpaired Image-to-Image Translation using Cycle-Consistent Adversarial Networks, Berkeley AI Research (BAIR) laboratory, UC Berkeley(https://arxiv.org/pdf/1703.10593.pdf) Accessed on: 17 May 2019 2:00 PM

- Olaf Ronneberger, Philipp Fischer, and Thomas Brox, U-Net: Convolutional Networks for Biomedical Image Segmentation, Computer Science Department and BIOSS Centre for Biological Signalling Studies, University of Freiburg, Germany (https://arxiv.org/pdf/1505.04597.pdf) Accessed on: 17 May 2019 2:00 PM

- Introduction to Generative Adversarial Networks (GANs): Types, and Applications, and Implementation(https://heartbeat.fritz.ai/introduction-to-generative-adversarialnetworks-gans-35ef44f21193) Accessed on: 19 May 2019 8:30 AM

- Phillip Isola Jun-Yan Zhu Tinghui Zhou Alexei A. Efros, Image-to-Image Translation with Conditional Adversarial Networks Berkeley AI Research (BAIR) Laboratory, UC Berkeleyj (https://arxiv.org/pdf/1611.07004.pdf) Accessed on: 19 May 2019 9:00 AM

- CUHK Face Sketch Database(mmlab.ie.cuhk.edu,hk/facesketch.html) Accessed on: 19 may

This page is contributed by Kixor & his team . If you like AIHUB and would like to contribute, you can also write an article & mail your article to itsaihub@gmail.com . See your articles appearing on AI HUB platform and help other AI Enthusiast.

Your means of telling all in this paragraph

is truly nice, every one can easily know it, Thanks a lot.

adreamoftrains webhosting

Wow, wonderful blog layout! How long have you been blogging for?

you made blogging look easy. The overall look of your site is great, as well as the content!

Like!! I blog frequently and I really thank you for your content. The article has truly peaked my interest.

where is the source code of this project

Hi are you a bscIt student and need any kind of study material or any help visit the website http://www.bschelp.com