Welcome to a new tutorial on Decision Trees from scratch. The Machine Learning from Scratch series is our fourth algorithm, where we have covered all mathematical concepts and a project from scratch with detailed explanations.

INTRODUCTION

A decision tree is essentially a series of if-then statements that, when applied to a record in a data set, results in the classification of that record. Therefore, once you’ve created your decision tree, you can run a data set through the program and get a classification for each record within the data set. What this means to you, as a manufacturer of quality widgets, is that the program you create from this article will be able to predict the likelihood of each user, within a data set, purchasing your finely crafted product.

A decision tree is a supervised learning algorithm (having a pre-defined target variable) mostly used in classification problems. It works for both categorical and continuous input and output variables. In this technique, we split the population or sample into two or more homogeneous sets (or sub-populations) based on the input variables’ most significant splitter/differentiator.

LET’s MAKE IT SIMPLER

A decision tree is a clever tool that helps computers make choices by asking questions. Imagine you have a box of fruits – apples, oranges, and bananas – but you can’t see what’s inside. You want the computer to guess the fruit in the box without peeking. A decision tree is like a game of “20 Questions” you play with the computer to figure out the fruit.

It all starts with a simple question: “Is it red?” This question helps split the fruits into two groups: the red ones and the non-red ones. Now, you have two smaller groups to work with.

But you’re not done yet! For each of these groups, you ask more questions. For the red group, you might ask, “Is it round?” This further divides the red fruits into two more groups: round and non-round. You keep asking more questions and dividing the fruits into smaller and smaller groups until you can confidently say what’s in the box. For instance, you might reach a group that contains only red, round fruits, and that’s most likely apples.

This process of asking questions and splitting into groups is what a decision tree does. It helps you classify the fruit in the box based on your questions. If you follow the questions and arrive at the group of red, round fruits, the computer will tell you, “It’s an apple!”

Now, let’s relate this to real-life situations. Suppose you have a lemonade stand, and you want to know if people walking by will buy lemonade. You can use a decision tree by asking questions like “Is it a hot day?” (if yes, more people might want lemonade), “Is it cloudy?” (if yes, maybe fewer people will want lemonade), “Are there many people walking by?” (if yes, more potential customers), and “Is the lemonade price high or low?” (price might affect sales).

By answering these questions and following the branches of your decision tree, you can predict if you’ll sell a lot of lemonade or just a little. In the widget manufacturing example, you’d ask questions about potential customers, like their age, location, and past buying history, and the decision tree helps you predict whether they’re likely to buy your widgets or not.

So, in simple terms, a decision tree is like a smart game of questions that helps computers make predictions or decisions based on the information you have. It’s a useful tool for solving all sorts of problems, from guessing fruits to running a business!

HOW DECISION TREE WORKS ?

A decision tree is a hierarchical decision-making tool that starts with a root node representing an initial question or choice and branches into child nodes based on specific criteria, recursively guiding decisions until reaching leaf nodes that provide outcomes or classifications. Each node contains conditions for data splitting, allowing the tree to segment data effectively. Decision trees are widely used in fields like machine learning and data analysis for tasks such as classification and regression, offering interpretable rules and the ability to handle missing data while simplifying complex decision-making processes into a visual and easily understandable structure.

Decision Tree Algorithm Pseudocode

- 1. Place the dataset’s best attribute at the tree’s root.

- 2. Split the training set into subsets. Subsets should be made so that each subset contains data with the same value for an attribute.

- 3. Repeat step 1 and step 2 on each subset until you find leaf nodes in all the tree branches.

Decision Tree classifier

In the context of decision trees, predicting a class label for a given record begins at the tree’s root. At this point, we compare the attribute value of the root node with the corresponding attribute value of the record in question. Based on this comparison, we follow the branch corresponding to the observed value, moving to the next node in the tree. This process continues as we compare the attribute values of our record with those of other internal nodes in the tree until we finally arrive at a leaf node that provides the predicted class value. It’s worth noting that understanding how to create the decision tree model is essential, as it enables us to harness the tree’s predictive capabilities effectively.

Assumptions while creating Decision Tree

Below are some of the assumptions we make while using the Decision tree:

- 1. Initially, the whole training set is considered the root.

- 2. Feature values are preferred to be categorical. If the values are continuous, they are discredited before building the model.

- 3. Records are distributed recursively based on attribute values.

- 4. Order to place attributes as root or internal nodes of the tree is done by using some statistical approach.

HOW TO SPLIT NODES?

There are a few algorithms to find an optimum split. Let’s look at the following to understand the mathematics behind it.

Entropy

Entropy is a concept used in decision tree algorithms, particularly in splitting nodes to create a decision tree. Entropy is a measure of impurity or disorder within a dataset, and it helps decision trees determine how to effectively split data into child nodes. The entropy of a set of probabilities is:

Entropy measures the uncertainty or disorder in the node. If the data in the node is perfectly pure (e.g., all examples belong to one class), the entropy is 0. The entropy is higher if the data is evenly split among multiple classes, indicating more disorder.

Suppose we have a set of binary responses from some variable, all positive/true/1. In that case, knowing the values of the variable does not hold any predictive value for us since all the outcomes are positive. Hence, the entropy is zero.

Select a feature (attribute) and its values to create child nodes to split the data. For each possible split, you calculate the weighted average of the entropies of the resulting child nodes. This is known as “information gain.” Information gain quantifies how much the entropy decreases after splitting a node using a particular feature. Higher information gain implies a more effective split. Decision tree algorithms aim to maximize information gain when choosing how to split nodes. The algorithm selects the split with the highest information gain, meaning it reduces the disorder in the child nodes the most. This process continues recursively, creating new internal nodes and branches until certain stopping criteria are met (e.g., a maximum tree depth or a minimum number of data points in a node.

Information Gain (IG) = Entropy(Original Node) – Weighted Sum of Entropy(Child Nodes

By using entropy and information gain, decision tree algorithms efficiently partition the data into subsets, allowing the tree to learn and make predictions about the target variable, whether it’s classification (e.g., predicting a category) or regression (e.g., predicting a numerical value). The goal is to create a tree structure that minimizes uncertainty and maximizes predictive accuracy.

Gini index

The Gini index, also known as the Gini impurity or Gini coefficient, is another measure used in decision tree algorithms to evaluate the impurity or disorder of a dataset. It quantifies the likelihood of misclassifying a randomly chosen element in the dataset if labeled randomly according to the class distribution. In decision trees, the Gini index helps determine how to split data effectively by selecting the attribute that minimizes the impurity in the resulting child nodes.

The Gini index is simply the expected error rate:

- 1. To build the decision tree, select a feature (attribute) and its associated values to split the data into child nodes. For each possible split, you calculate the Gini index for the resulting child nodes.

- 2. After computing the Gini index for each child node, you calculate a weighted average of these indices. The weights are determined by the relative sizes (number of data points) of the child nodes.

- 3. The attribute and split that minimize the weighted Gini index are chosen as the best split. This means that the selected split reduces the impurity in the child nodes the most effectively.

- 4. The decision tree algorithm repeats this process recursively, selecting the best splits at each internal node to build the tree. This continues until specific stopping criteria are met, such as reaching a maximum tree depth or a minimum number of data points in a node.

The Gini index is an alternative to entropy for measuring impurity in decision tree algorithms. Decision tree algorithms aim to select the attribute and split that minimizes the Gini index, as this indicates the most effective reduction in impurity and, consequently, the creation of more homogeneous child nodes. Overall, the Gini index helps decision trees make informed decisions about how to partition data to create an accurate predictive model.

ID3

ID3 uses the concept of entropy (a measure of impurity) and information gain to decide how to split the dataset into subsets. It calculates the entropy of the dataset and then calculates the information gain for each feature (attribute) by splitting the data based on that attribute. Information gain quantifies how much the attribute reduces the uncertainty in classifying data points. ID3 selects the feature that provides the highest information gain as the best attribute to split the dataset. This means that the selected attribute results in the most significant reduction in uncertainty about the class labels of data points.

Once the best attribute is chosen, the dataset is split into subsets based on the values of that attribute. Each subset becomes a child node in the decision tree.The algorithm recursively repeats the process for each child node, calculating entropy, information gain, and selecting the best attribute for splitting. This process continues until specific stopping criteria are met, such as reaching a maximum tree depth, having a minimum number of data points in a node, or when all data points in a node belong to the same class.

When a stopping criterion is met or no further improvement in information gain is possible, the algorithm designates a node as a leaf node. Leaf nodes represent the final classification decision. If all data points in a leaf node belong to the same class, it’s a pure leaf; otherwise, the majority class is assigned.

After the tree is constructed, some implementations of ID3 may include a pruning step to remove branches that do not significantly contribute to classification accuracy. This helps avoid overfitting, where the tree is too complex and may not generalize well to new, unseen data.

One limitation of ID3 is that it cannot handle continuous (numerical) attributes directly. It works best with categorical attributes. Variations of ID3, such as C4.5, have been developed to handle numerical attributes and offer additional features and improvements. Despite its limitations, ID3 laid the foundation for many decision tree algorithms used in machine learning today, making it an important historical contribution to the field.

See detail about ID3 here

Github Link of Decision tree from scratch is provided at the end of this article !!

DECISION TREE USING SKLEARN

To proceed, you need to import all the required libraries that we require in our further coding. Here we have imported graphviz to visualize decision tree diagram. This is can install in conda environment using conda install python-graphviz .

import numpy as np

import pandas as pd

from sklearn.tree import export_graphviz

import IPython, graphviz, re

RANDOM_SEED = 42

np.random.seed(RANDOM_SEED)We’re going to use the iris dataset. The task for us is now to find the best “way” to split the dataset such that best nodes can be achieved.

df = pd.read_csv("iris.csv")

df['species_label'],_ = pd.factorize(df['species'])

y = df['species_label']

X = df[['petal_length', 'petal_width']]Now let’s define a class which draws a representation of a random forest in IPython. Source: https://github.com/fastai/fastai/blob/e6b56de53f80d2b2d39037c82d3a23ce72507cd7/old/fastai/structured.py#L22

def draw_tree(t, df, size=10, ratio=0.6, precision=0):

s=export_graphviz(t, out_file=None, feature_names=df.columns, filled=True,special_characters=True, rotate=True, precision=precision)

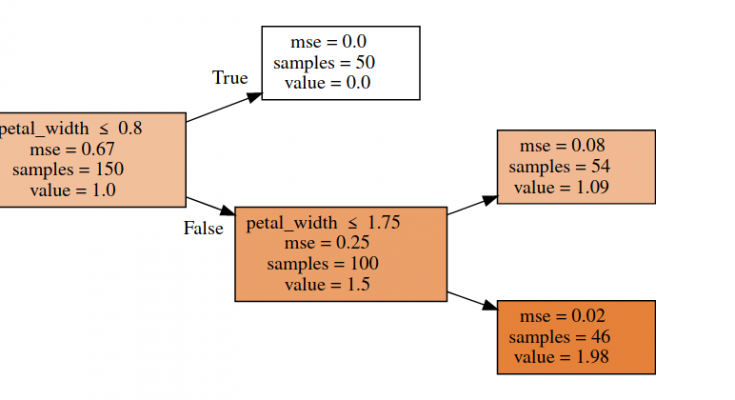

IPython.display.display(graphviz.Source(re.sub('Tree {',f'Tree {{ size={size}; ratio={ratio}', s)))We are using RandomForestRegressor with one estimator, which means we’re using a Decision Tree model. Here is the tree structure of our model:

from sklearn.ensemble import RandomForestRegressor

reg = RandomForestRegressor(n_estimators=1, max_depth=2, bootstrap=False, random_state=RANDOM_SEED)

reg.fit(X, y)

draw_tree(reg.estimators_[0], X, precision=2)

Decision tree models can be used for both classification and regression. The algorithms for building trees break down a data set into smaller and smaller subsets while, at the same time, an associated decision tree is incrementally developed. The final result is a tree with decision nodes and leaf nodes. A decision node has two or more branches. The leaf node represents a classification or decision (used for regression). A root node is the topmost decision node in a tree that corresponds to the best predictor (the most important feature). Decision trees can handle both categorical and numerical data.

Finally, let’s look at how we use all this to make predictions.

y_pred = dtree.predict(X_test)

count_misclassified = (y_test != y_pred).sum()

print('Misclassified samples: {}'.format(count_misclassified))

accuracy = metrics.accuracy_score(y_test, y_pred)

print('Accuracy: {:.2f}'.format(accuracy))

Output:

Misclassified samples: 2

Accuracy: 0.96

Cross-Validation

Cross-Validation is a technique that involves reserving a particular sample of a data set on which you do not train the model. Later, you test the model on this sample before finalizing the model.

Here are the steps involved in cross-validation:

- You reserve a sample data set.

- Train the model using the remaining part of the data set.

- Use the reserve sample of the data set test (validation) set. This will help you to know the effectiveness of model performance. If your model delivers a positive result on validation data, go ahead with the current model. It rocks!

OVERFITTING

Overfitting is a practical problem while building a decision tree model. The model is having an issue of overfitting is considered when the algorithm continues to go deeper and deeper in to reduce the training set error but results in an increased test set error i.e., the Accuracy of prediction for our model goes down. It generally happens when it builds many branches due to outliers and irregularities in data.

Two approaches that we can use to avoid overfitting are:

- Pre-Pruning

- Post-Pruning

Pre-Pruning

In pre-pruning, it stops the tree construction a bit early. It is preferred not to split a node if its goodness measure is below a threshold value. But it’s difficult to choose an appropriate stopping point.

Post-Pruning

In post-pruning first, it goes deeper and deeper in the tree to build a complete tree. If the tree shows the overfitting problem then pruning is done as a post-pruning step. We use a cross-validation data to check the effect of our pruning. Using cross-validation data, it tests whether expanding a node will make an improvement or not.

If it improves, then we can continue by expanding that node. But if it shows a reduction in accuracy, then it should not be expanded i.e., the node should be converted to a leaf node.

Decision Tree Algorithm Advantages and Disadvantages

Advantages:

- Decision Trees are easy to explain. It results in a set of rules.

- It follows the same approach as humans generally follow while making decisions.

- The interpretation of a complex Decision Tree model can be simplified by its visualizations. Even a naive person can understand logic.

- The Number of hyper-parameters to be tuned is almost null.

Disadvantages:

- There is a high probability of overfitting in the Decision Tree.

- Generally, it gives low prediction accuracy for a dataset compared to other machine learning algorithms.

- Information gain in a decision tree with categorical variables gives a biased response for attributes with greater no. of categories.

- Calculations can become complex when there are many class labels.

Your means of explaining the whole thing in this post is actually

fastidious, every one be able to easily understand it, Thanks

a lot. adreamoftrains website host